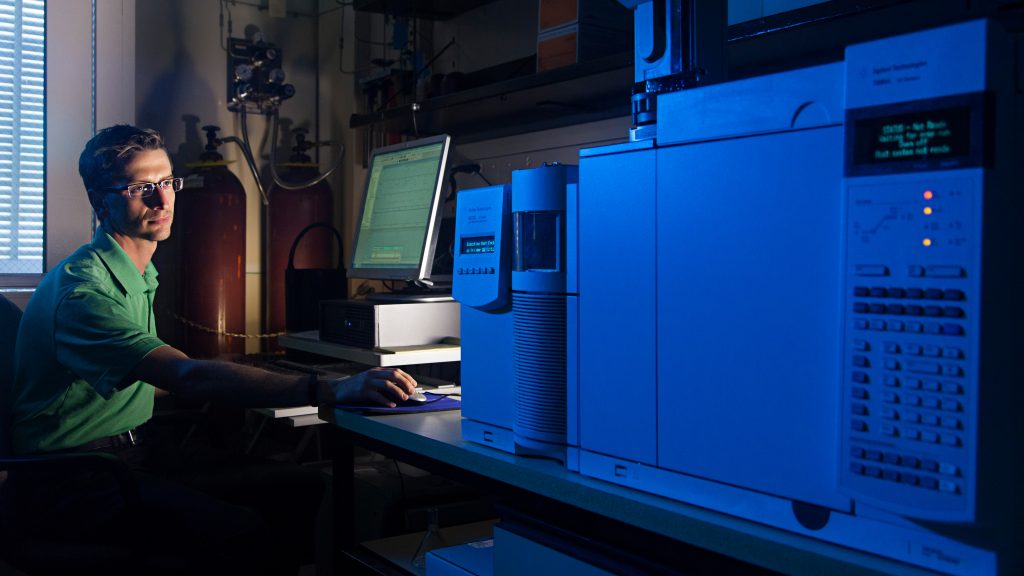

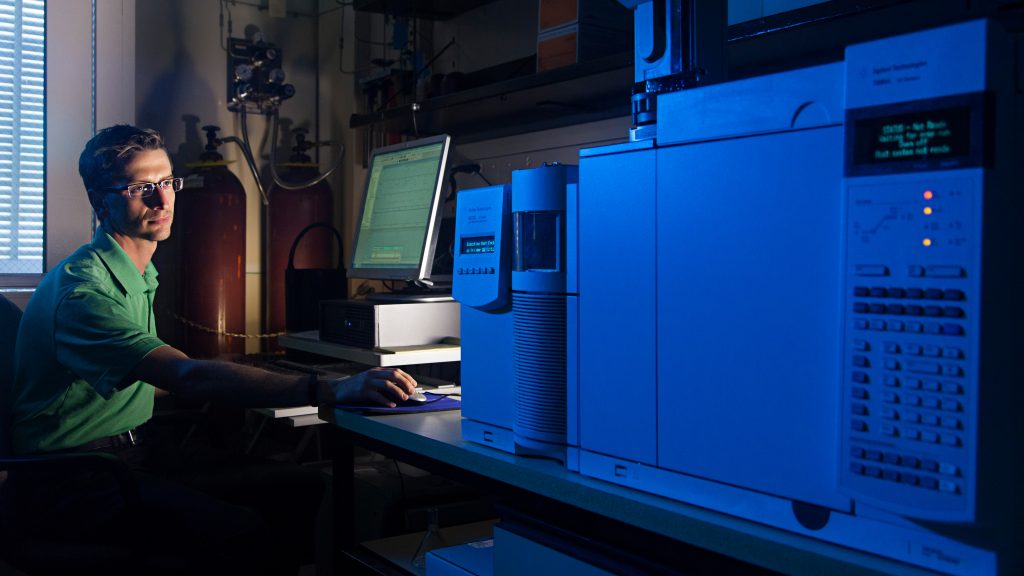

AVN is a leading chemical and advanced systems technology company that delivers solutions to hard problems from concept to commercialization.

What we do

AVN’s deep technical heritage and uncommon lab and pilot plant facilities provide the talent and resources that chemical and energy companies need to develop, improve and scale chemical processes while minimizing cost and risk.

Organizations needing advanced systems or software development services can better manage schedule and cost by working with AVN and, simultaneously, tap into AVN’s expertise in turning data into actionable knowledge.

Business Units

Laboratories

Rapid access to a diversity of expertise and technical resources for chemical process development efforts.

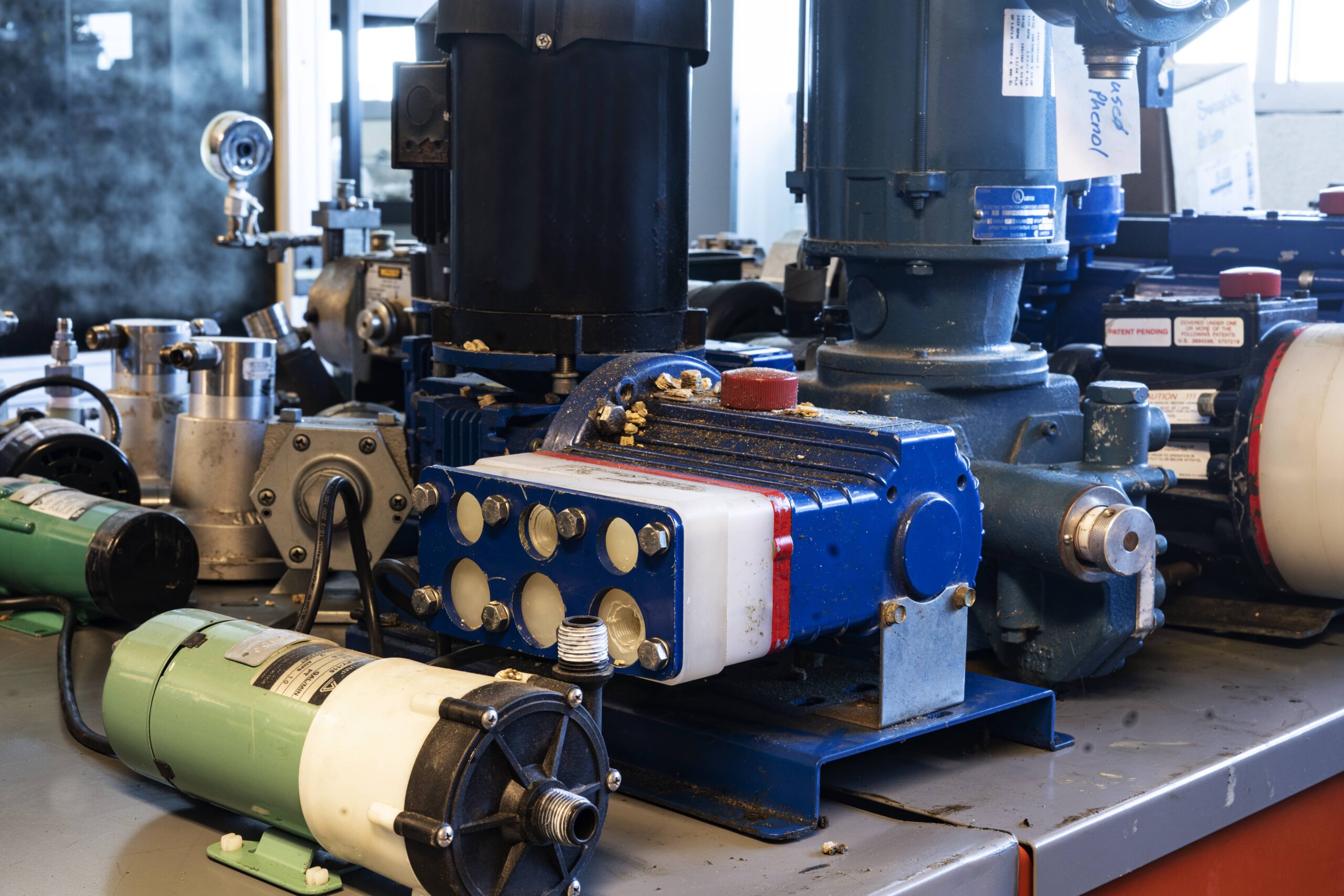

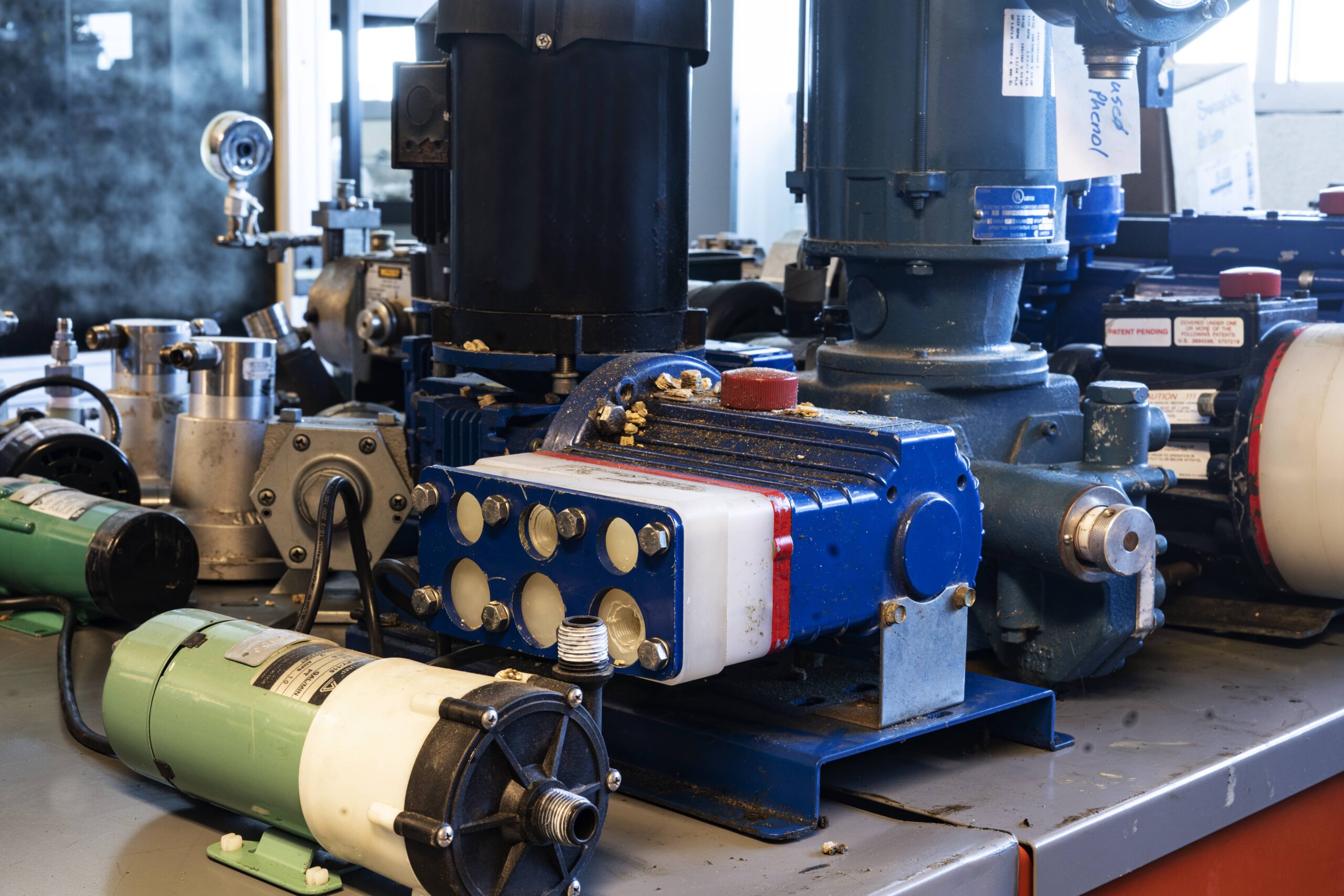

Pilot Plants

Powerful, proven and established technology development and improvement methodologies to increase return on investment.

Advanced Software Technologies

AVN’s Advanced Software Technologies group provides customers with unique offerings to specifically meet their needs.

Specialty and Custom Chemicals Manufacturing

Infrastructure, technology development methodologies and in-depth experience to produce commercial quantities of specialty and fine chemicals.

AVN focuses on moving your idea into the marketplace through innovation. Our approach combines informed planning, efficient and effective collaboration, data-based decision making, and disciplined urgency.

Delivering value over and above a customer’s expectation is always our goal.

The AVN business model is built on what AVN provides to customers and how it delivers it.

Why AVN?

Your aspiration meets innovation

01

Uncommon expertise and infrastructure

Rapid access to a diversity of expertise and technical resources.

02

AVN is independent

Unbiased expertise with flexible service agreements and arrangements.

03

Customers Retain Intellectual Property Rights

Clients retain and preserve intellectual property ownership, enabling security and long-term competitive protection.

04

Confidentiality

Multiple checks and balances, including NDAs, protect confidential client information.

AVN is a strategic innovation partner to industry and organizations serving customers on six continents. AVN’s Chemical Technologies business serves mid-sized chemical and energy companies and chemical-based new ventures and start-ups, and provides specialized services to large, international chemical and energy companies. AVN’s Advanced Software Technologies Group works closely with federal prime contractors, federal agencies, and commercial and industrial enterprises that seek to turn data into actionable knowledge.

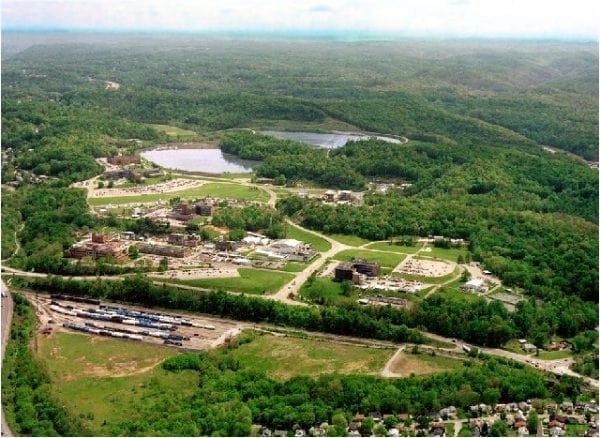

Who We Are

Headquarters and Chemical Process Research and Development

1740 Union Carbide Drive

South Charleston, WV 25303

Advanced Software Technologies

430 Drummond Street, Suite 2

Morgantown, WV 26505

Locations and Business Units

Corporate Headquarters, Chemical Process Technologies, Technical Engineering, Specialty & Custom Chemicals Manufacturing

Physical Address

1740 Union Carbide Drive

South Charleston, WV 25303

Mailing Address

P.O. Box 8396

South Charleston, WV 25303

Locations and Business Units

Advanced Software Technologies

Physical Address

430 Drummond Street, Suite 2

Morgantown, WV 26505
